What Is Low Latency? Streaming Protocols and Architecture

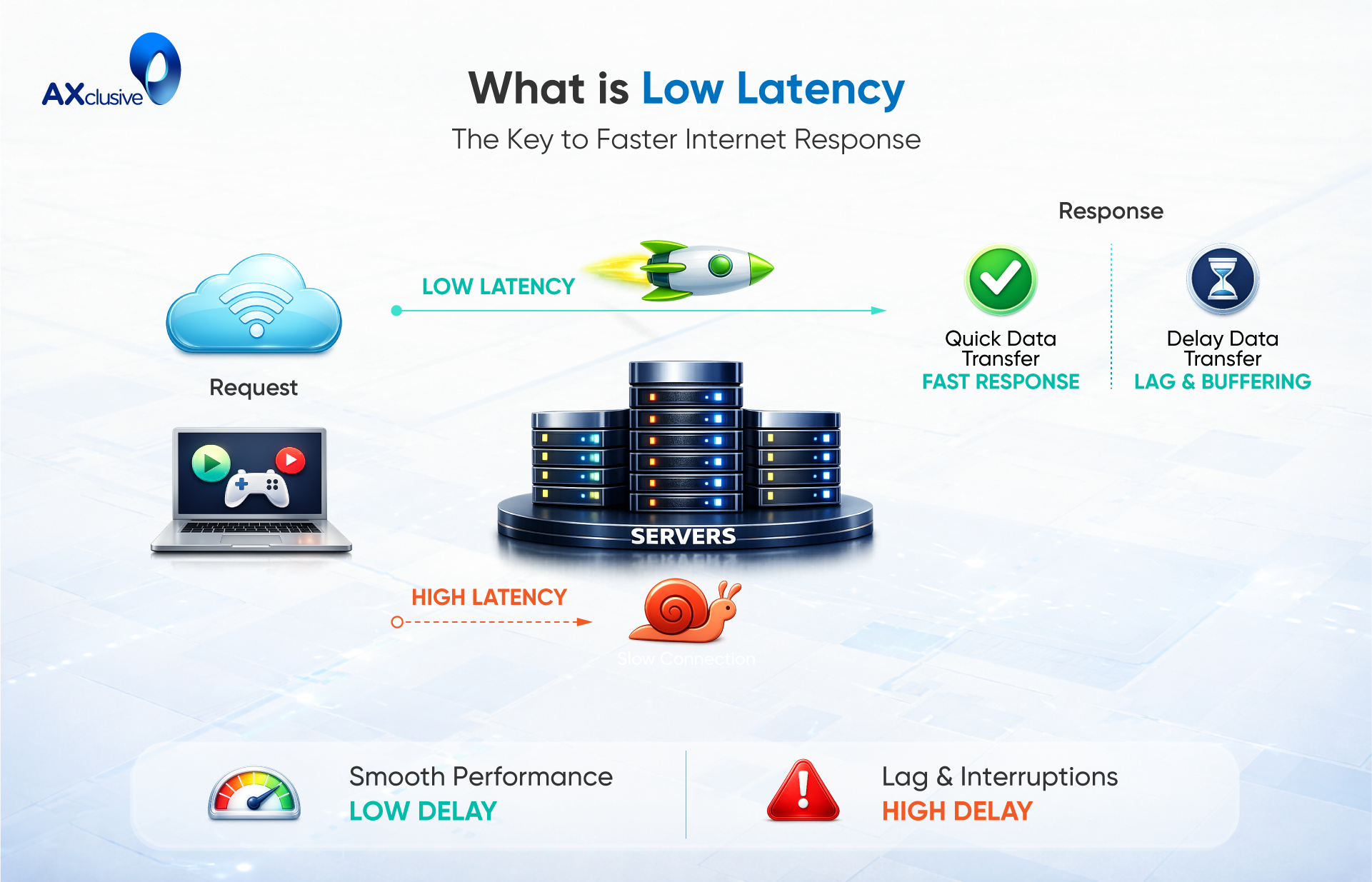

What is low latency? It refers to minimal delay in data transmission, allowing information to move quickly between systems and ensuring smooth, real-time performance. Low latency is essential for applications where speed and responsiveness matter. Join Axclusive ISP to explore more in the article below.

What Is Low Latency?

Low latency describes a system state where the time gap between an action and its resulting response remains very small. From a technical perspective, latency is the time required for a data packet to travel from its origin to a target system, be processed there, and for the response to travel back. This delay is commonly measured in milliseconds, though more demanding environments may measure it in microseconds.
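As a rough illustration of that definition, the sketch below times an action from request to response and reports the result in milliseconds. The names `measure_latency_ms` and `simulated_request` are hypothetical, and the 10 ms sleep only stands in for a real network round trip.

```python
import time
from typing import Callable


def measure_latency_ms(action: Callable[[], object], samples: int = 5) -> list[float]:
    """Time `action` several times and return each duration in milliseconds."""
    durations = []
    for _ in range(samples):
        start = time.perf_counter()  # monotonic, high-resolution clock
        action()                     # issue the request and wait for the response
        durations.append((time.perf_counter() - start) * 1000.0)
    return durations


def simulated_request() -> None:
    # Hypothetical stand-in for a real round trip (roughly 10 ms).
    time.sleep(0.010)


latencies = measure_latency_ms(simulated_request)
print(f"min {min(latencies):.1f} ms, max {max(latencies):.1f} ms")
```

In a real measurement, `action` would wrap an actual request, and several samples would be collected because individual readings vary.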

The definition of low latency depends heavily on context and use case. In content streaming platforms, latency measured in a few seconds may still be acceptable for live broadcasts. In contrast, real-time applications such as voice or video communication require much tighter thresholds, where delays typically must stay below 150 milliseconds to preserve natural interaction. In specialized systems such as financial trading platforms, even microsecond-level delays can affect outcomes, making ultra-low latency a critical requirement.

In practice, low latency reflects how efficiently a system handles data transmission, processing, and response delivery. Achieving it requires optimized networks, fast processing paths, and infrastructure designed to minimize delay at every stage of communication.

Applications Where Latency Matters

Latency directly affects how systems respond to user actions. In environments where timing, accuracy, or interaction quality is critical, even small delays can degrade performance or introduce risk. Different applications tolerate different latency thresholds, but all rely on predictable and controlled network behavior. Understanding where latency matters most helps organizations design networks that meet real operational requirements rather than generic performance targets.

Audio and Video Streaming Experiences

Acceptable low latency range: ~1–5 seconds

Live audio and video streaming requires controlled latency to maintain synchronization and enable audience interaction. Platforms hosting live events, webinars, or broadcasts depend on predictable delays so viewers receive content in near real time. Excessive latency disrupts engagement features such as live chat, reactions, and Q&A, reducing the overall experience.

Activity Feeds and Real-Time Updates

Acceptable low latency range: < 1 second to a few seconds

Applications that deliver notifications, alerts, or live updates depend on fast data delivery to remain relevant. Collaboration tools, messaging platforms, and news feeds require low latency to ensure information reaches users while it is still actionable. Delays reduce usefulness and can impact decision-making.

Real-Time Voice and Video Communication

Acceptable low latency range: < 150 ms (one-way)

Voice and video calls require very low latency to preserve natural conversation flow. When delays exceed this threshold, users experience interruptions such as echo, overlapping speech, or awkward pauses. Business communications, customer support, and remote collaboration depend on low latency to maintain clarity and trust.

Telemedicine and Remote Care

Acceptable low latency range: < 150 ms

Healthcare applications rely on low latency for accurate communication between patients and providers. Real-time consultations, remote monitoring, and assisted procedures require timely feedback to avoid misinterpretation or delayed response. Network latency directly impacts clinical effectiveness and patient safety.

Online and Competitive Gaming

Acceptable low latency range: < 100 ms

Gaming platforms demand fast response times to reflect player actions accurately. Low latency ensures responsive controls and synchronized game states, especially in multiplayer and competitive environments. Higher latency introduces lag and inconsistency, affecting fairness and user experience.

Web Browsing Responsiveness

Acceptable low latency range: < 100 ms

Web performance depends on quick request and response cycles. Low latency improves page load times, interaction speed, and overall usability. For content-driven and e-commerce platforms, responsiveness directly influences engagement, retention, and conversion rates.

Augmented and Virtual Reality Environments

Acceptable low latency range: < 20 ms

AR and VR systems require extremely low latency to align visual output with user movement. Delays can cause motion mismatch, discomfort, or loss of immersion. Low latency is essential for realistic simulations, training systems, and interactive virtual experiences.

Financial Trading Systems

Acceptable low latency range: ~1–10 microseconds

Financial trading, particularly high-frequency trading, operates at microsecond timescales. Ultra-low latency enables faster market data processing and trade execution. Even minimal delays can affect pricing outcomes, making optimized network infrastructure critical in these environments.
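The thresholds listed above can be collected into a simple lookup for checking a measured delay against each use case. This is a minimal sketch: the dictionary keys and function names are illustrative, each limit is the upper end of the range given in this article, and activity feeds are left out because their range has no single upper bound.

```python
# Upper bounds in milliseconds, taken from the ranges listed in this article.
LATENCY_BUDGET_MS = {
    "live_streaming": 5000.0,    # ~1-5 seconds
    "voice_video_call": 150.0,   # < 150 ms one-way
    "telemedicine": 150.0,
    "gaming": 100.0,
    "web_browsing": 100.0,
    "ar_vr": 20.0,
    "financial_trading": 0.010,  # ~1-10 microseconds
}


def within_budget(application: str, measured_ms: float) -> bool:
    """Return True if the measured delay is below the application's limit."""
    return measured_ms < LATENCY_BUDGET_MS[application]


def applications_supported(measured_ms: float) -> list[str]:
    """List every application whose limit the measured delay stays under."""
    return [app for app, limit in LATENCY_BUDGET_MS.items() if measured_ms < limit]


print(applications_supported(80.0))
```

A path measured at 80 ms would pass for streaming, calls, gaming, and browsing in this model, but not for AR/VR or trading.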

What Causes Latency in Networks

Network latency occurs because data requires time to be processed and delivered from one location to another. Even digital traffic must pass through multiple technical stages before reaching the end user, and each stage introduces delay.

A common cause of latency is data processing. Information often needs to be encoded, compressed, or reformatted before transmission. In media and application delivery, data may also be modified or routed through intermediate servers, which adds processing time.

Network congestion increases latency when many data flows compete for limited bandwidth. Similar to traffic bottlenecks, packets may queue or slow down when network capacity is constrained. Fluctuating bandwidth conditions can further increase delays.
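To make the queueing effect concrete, the following sketch estimates how long a packet waits behind others on a constrained link. It assumes a simplified first-in, first-out queue of equally sized packets; the function names and the example figures (1,500-byte packets, a 100 Mbps link) are illustrative.

```python
def serialization_delay_ms(packet_bytes: int, link_mbps: float) -> float:
    """Time to place one packet on the wire, in milliseconds."""
    bits = packet_bytes * 8
    return bits / (link_mbps * 1_000_000) * 1000.0


def queueing_delay_ms(packets_ahead: int, packet_bytes: int, link_mbps: float) -> float:
    """Wait time behind `packets_ahead` equally sized packets in a FIFO queue."""
    return packets_ahead * serialization_delay_ms(packet_bytes, link_mbps)


# One 1,500-byte packet on a 100 Mbps link takes about 0.12 ms to send;
# with 50 such packets queued ahead, the wait grows to about 6 ms.
print(serialization_delay_ms(1500, 100.0))
print(queueing_delay_ms(50, 1500, 100.0))
```

The same queue on a 10 Mbps link would add roughly ten times the delay, which is why constrained bandwidth and congestion compound each other.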

Physical distance also impacts latency. The farther data travels, the longer it takes to arrive. Content delivery networks help reduce this effect by serving data from locations closer to users.

Together, processing overhead, congestion, bandwidth limits, and distance are the main factors that contribute to network latency.
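The distance effect can be estimated with simple arithmetic. The sketch below assumes signals travel through optical fiber at roughly 200,000 km per second (about two-thirds of the speed of light in a vacuum) and ignores routing detours and equipment delays, so real figures will be higher. The function names and example distances are illustrative.

```python
FIBER_KM_PER_S = 200_000.0  # approximate signal speed in optical fiber


def propagation_delay_ms(distance_km: float) -> float:
    """One-way propagation delay over fiber, in milliseconds."""
    return distance_km / FIBER_KM_PER_S * 1000.0


def round_trip_ms(distance_km: float) -> float:
    """Request-and-response propagation delay, in milliseconds."""
    return 2 * propagation_delay_ms(distance_km)


# A distant origin 6,000 km away versus a nearby edge location 60 km away.
print(round_trip_ms(6000.0))  # about 60 ms
print(round_trip_ms(60.0))    # about 0.6 ms
```

This is the gap a content delivery network closes: serving from the nearby location removes most of the propagation delay before any other optimization is applied.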

Protocols Used for Low-Latency Streaming

Low-latency streaming depends on protocols designed to minimize delay while maintaining stability and media quality. Each protocol addresses different delivery challenges, such as unreliable networks, browser compatibility, scalability, or real-time interaction. Selecting the right protocol depends on the use case, audience size, and latency tolerance.

Secure Reliable Transport (SRT)

Secure Reliable Transport focuses on delivering video reliably across unpredictable networks. Built on UDP, it recovers lost packets through retransmission and absorbs jitter with a receive buffer, making it suitable for long-distance contribution and remote production workflows.

SRT is commonly used for ingest rather than playback. Media streams are often delivered to a central platform using SRT, then converted into other formats for distribution. While adoption continues to grow, native playback support remains limited.
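Recovering lost packets by retransmission only works if the resend arrives before the packet is due for playback, which is why reliability and latency trade off against each other. The sketch below is a simplified model of that trade-off, not the SRT library's API: it assumes each recovery attempt costs one full round trip, and the function name and figures are hypothetical.

```python
def retransmission_attempts(latency_budget_ms: float, rtt_ms: float) -> int:
    """How many resend attempts fit inside the receiver's latency budget.

    Simplified assumption: each attempt costs one full round trip
    (loss report out, retransmitted packet back).
    """
    if rtt_ms <= 0:
        raise ValueError("rtt_ms must be positive")
    return int(latency_budget_ms // rtt_ms)


# A 120 ms budget on a 30 ms round-trip path leaves room for several attempts.
print(retransmission_attempts(120.0, 30.0))
# The same budget on a 150 ms path leaves none, so losses cannot be repaired.
print(retransmission_attempts(120.0, 150.0))
```

In this model, a longer path needs a larger latency budget to keep the same resilience to packet loss.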

Key strengths

- Open and vendor-neutral

- Handles packet loss and unstable network conditions

- Maintains consistent video quality at low latency

Key constraints

- Limited native player support

- Requires additional processing for end-user delivery

WebRTC for Real-Time Media

WebRTC enables near real-time media delivery directly in web browsers without plugins. It supports interactive communication such as live collaboration, conferencing, and audience participation with very low end-to-end delay.

Originally designed for peer-to-peer use, WebRTC scales less efficiently than HTTP-based protocols. However, modern streaming platforms extend WebRTC to support large audiences while preserving interactivity.

Key strengths

- Browser-based and standards-driven

- Supports sub-second latency

- Enables real-time interaction

Key constraints

- More complex to scale

- Less suited for traditional broadcast workflows

Low-Latency HLS Delivery

Low-Latency HLS extends the widely adopted HLS standard to reduce delay while retaining compatibility with existing players and CDNs. It enables faster segment delivery and partial segment playback, allowing viewers to see content closer to real time.

This approach works well for large-scale live streaming where moderate latency reduction is sufficient.
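A rough way to see why partial segments help: players usually stay a few media units behind the live edge, so shrinking the unit shrinks the delay. The sketch below assumes a player holds back three units, which is a common rule of thumb rather than a fixed property of every player, and it ignores encoding and network time. The function name and durations are illustrative.

```python
def live_edge_distance_s(unit_duration_s: float, units_buffered: int = 3) -> float:
    """Approximate delay behind the live edge caused by player buffering alone."""
    return unit_duration_s * units_buffered


# Full 6-second segments versus 0.5-second partial segments.
print(live_edge_distance_s(6.0))  # 18.0 seconds behind live
print(live_edge_distance_s(0.5))  # 1.5 seconds behind live
```

The total viewer-facing latency is higher than either figure once encoding, packaging, and delivery are added, but the buffering term is usually the largest one to reduce.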

Key strengths

- Broad device and player support

- Scales efficiently using CDNs

- Suitable for interactive live events

Key constraints

- Latency remains higher than real-time protocols

- Not designed for ultra-low latency use cases

Low-Latency DASH Streaming

Low-Latency DASH delivers reduced latency using chunked media transfer and CMAF formatting. It offers similar benefits to Low-Latency HLS while supporting open standards within the DASH ecosystem.

Device compatibility varies by platform, which can limit deployment options in certain environments.

Key strengths

- Standards-based adaptive streaming

- Efficient delivery at scale

Key constraints

- Limited playback support on some platforms

- Less universal than HLS

Real-Time Messaging Protocol (RTMP)

RTMP remains widely used for live stream contribution due to its stable and low-delay ingest performance. Many media platforms still require RTMP for sending content from encoders to streaming services.

However, RTMP no longer serves as a complete delivery solution due to lack of support on modern playback devices.

Key strengths

- Reliable and well-supported for ingest

- Compatible with most encoders

Key constraints

- Not suitable for end-user playback

- Gradually replaced by newer protocols

Low Latency Network Architecture Components

Low-latency networks are built through a combination of hardware, software, and operational improvements that reduce delay across the entire data path. Instead of relying on a single optimization, these architectures focus on minimizing processing time, accelerating packet handling, and improving visibility at each layer of the network. The following components form the foundation of modern low-latency network design.

Programmable Network Platforms

Programmable network platforms allow engineers to modify network behavior through software rather than fixed hardware logic. This flexibility enables faster deployment of performance optimizations, custom routing rules, and traffic-handling features that reduce unnecessary processing delays. By adapting network behavior to application requirements, programmable platforms help maintain low latency under changing workloads.

Smart Network Interface Cards

Smart network interface cards, or SmartNICs, offload network processing tasks from the main CPU to dedicated hardware. By handling packet filtering, encryption, and traffic steering directly on the card, SmartNICs reduce processing overhead and improve response times. Their programmable nature supports ultra-low latency use cases in data centers and high-performance environments.

Firmware Development Toolkits

Firmware development toolkits provide structured frameworks for building custom logic on programmable switches and SmartNICs. These toolkits allow developers to embed application-specific functions directly into network devices, reducing the need for additional processing layers and lowering end-to-end latency.

FPGA-Based Processing Solutions

FPGA-based solutions enable hardware-level customization of data processing paths. By tailoring logic to specific workloads, FPGA devices minimize instruction cycles and deliver predictable, low-latency performance. This approach is widely used in environments where timing precision is critical.

High-Speed Storage Area Networks

High-speed storage area networks support rapid access to shared data across data center systems. By using dedicated, high-throughput links, SANs reduce storage access delays and prevent bottlenecks that can increase application latency.

IT Operations and Monitoring Platforms

IT operations and monitoring platforms provide centralized insight into network, system, and application performance. Continuous monitoring helps teams detect latency issues early, optimize resource allocation, and maintain consistent low-latency performance across complex infrastructures.
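One common way monitoring surfaces latency problems is by tracking percentiles rather than averages, since a few slow responses can hide behind a healthy-looking mean. The sketch below uses the nearest-rank method on a made-up set of samples; the function names, sample values, and the 100 ms budget are all illustrative.

```python
import math
from statistics import mean


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% at or below it."""
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


def breaches_budget(samples: list[float], budget_ms: float, pct: float = 95) -> bool:
    """Flag the window if the chosen percentile exceeds the latency budget."""
    return percentile(samples, pct) > budget_ms


# Hypothetical response times in milliseconds, with one slow outlier.
window = [12, 15, 11, 14, 13, 250, 12, 16, 13, 14]

print(mean(window))            # 37: the average looks acceptable
print(percentile(window, 50))  # 13: the typical request is fast
print(percentile(window, 95))  # 250: the tail reveals the problem
print(breaches_budget(window, budget_ms=100))
```

Alerting on a high percentile catches the slow requests that users actually notice, even when the median stays flat.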

Frequently Asked Questions

Is lower latency always better?

Lower latency generally improves responsiveness and user experience, especially for real-time applications such as video calls, gaming, and financial systems. However, ultra-low latency is not necessary for every use case. Some applications, like on-demand video streaming or file downloads, can perform well with higher latency if stability and throughput remain consistent.

How does low latency differ from high latency?

Low latency refers to short delays between sending a request and receiving a response, resulting in fast and smooth interactions. High latency involves longer delays, which can cause lag, buffering, or slow system responses. The impact depends on the application, with real-time systems being more sensitive to latency differences.

How do I turn off low latency?

Low latency is not a simple on-or-off setting. It is a result of network configuration, infrastructure design, and application behavior. Users can indirectly increase latency by disabling performance optimizations, using slower connections, or routing traffic through distant servers, but this is rarely recommended.

What is latency in simple terms?

Latency describes how long it takes for data to travel from one point to another and back. In everyday terms, it is the delay between an action, such as clicking a button, and seeing the result on the screen.

Low latency remains a key requirement for systems that depend on speed, accuracy, and real-time interaction. Through the topics covered above, Axclusive has outlined where latency matters most, what causes it, and the technologies used to reduce it. A clear understanding of low latency helps organizations make informed decisions when designing networks and applications that deliver consistent, high-quality performance in today’s digital environments.
